The problem

With the rapid rise of AI, our team was tasked with leveraging the latest LLMs to increase engagement in Ansarada.

Rather than using AI to deliver functional improvements, we focused on using AI smarts to solve a strategic problem for our key users (Analysts and CFOs): time wasted synthesising reports and exports to make decisions during due diligence.

We addressed this by launching Aida, our ‘Ansaradan’ AI assistant who delivers on-demand insights inside the data room.

Launching AI in Ansarada

August 2025 - Present

Outcome & impact

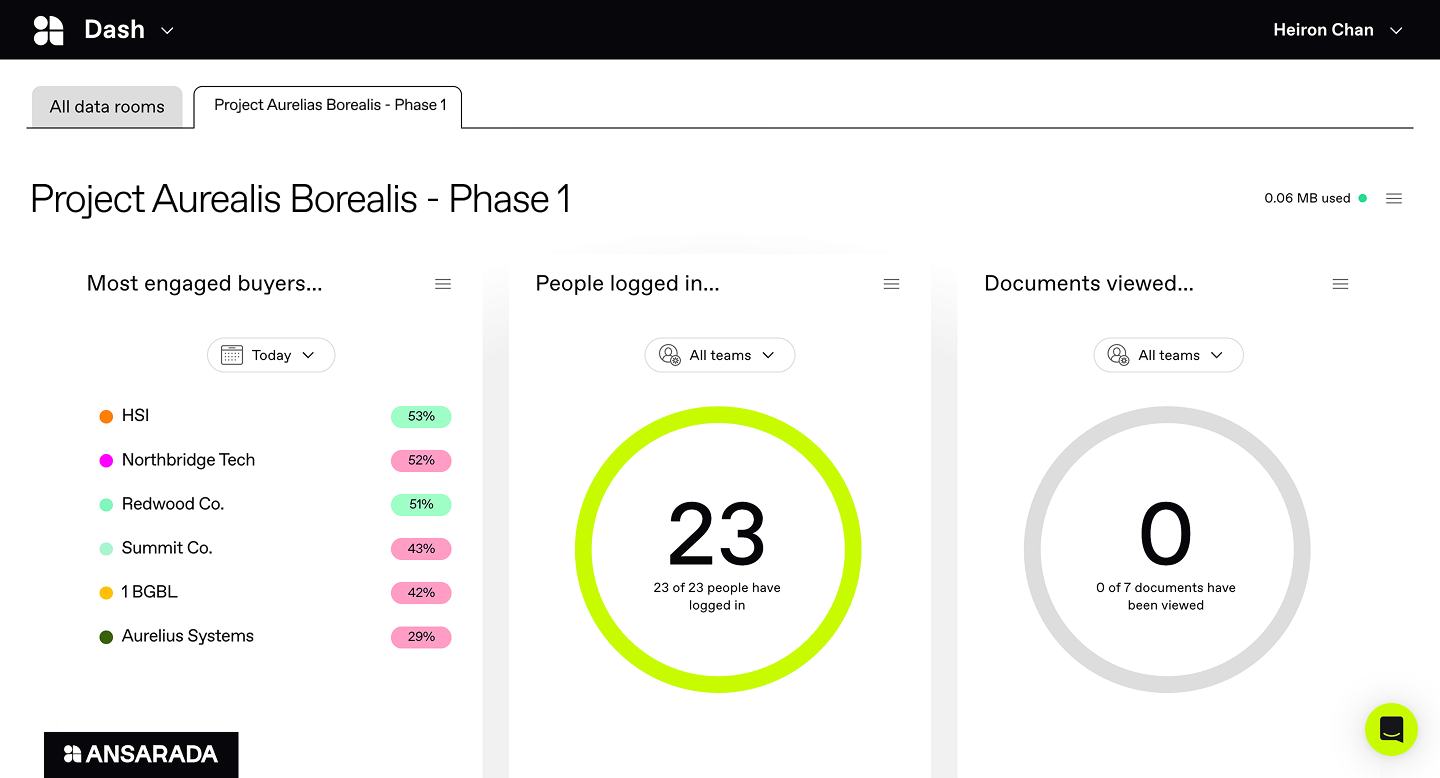

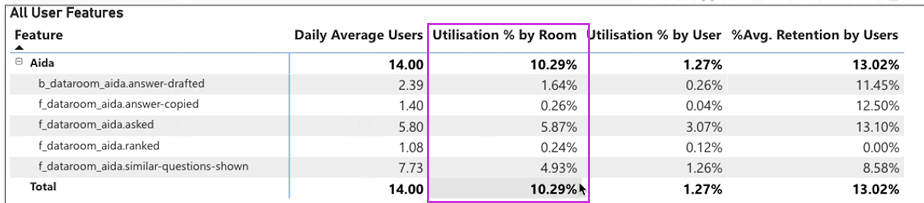

10.3% of all active data rooms are using Aida as of January 2026 (+4% since launch).

28% of usage has come from our target persona, Analysts.

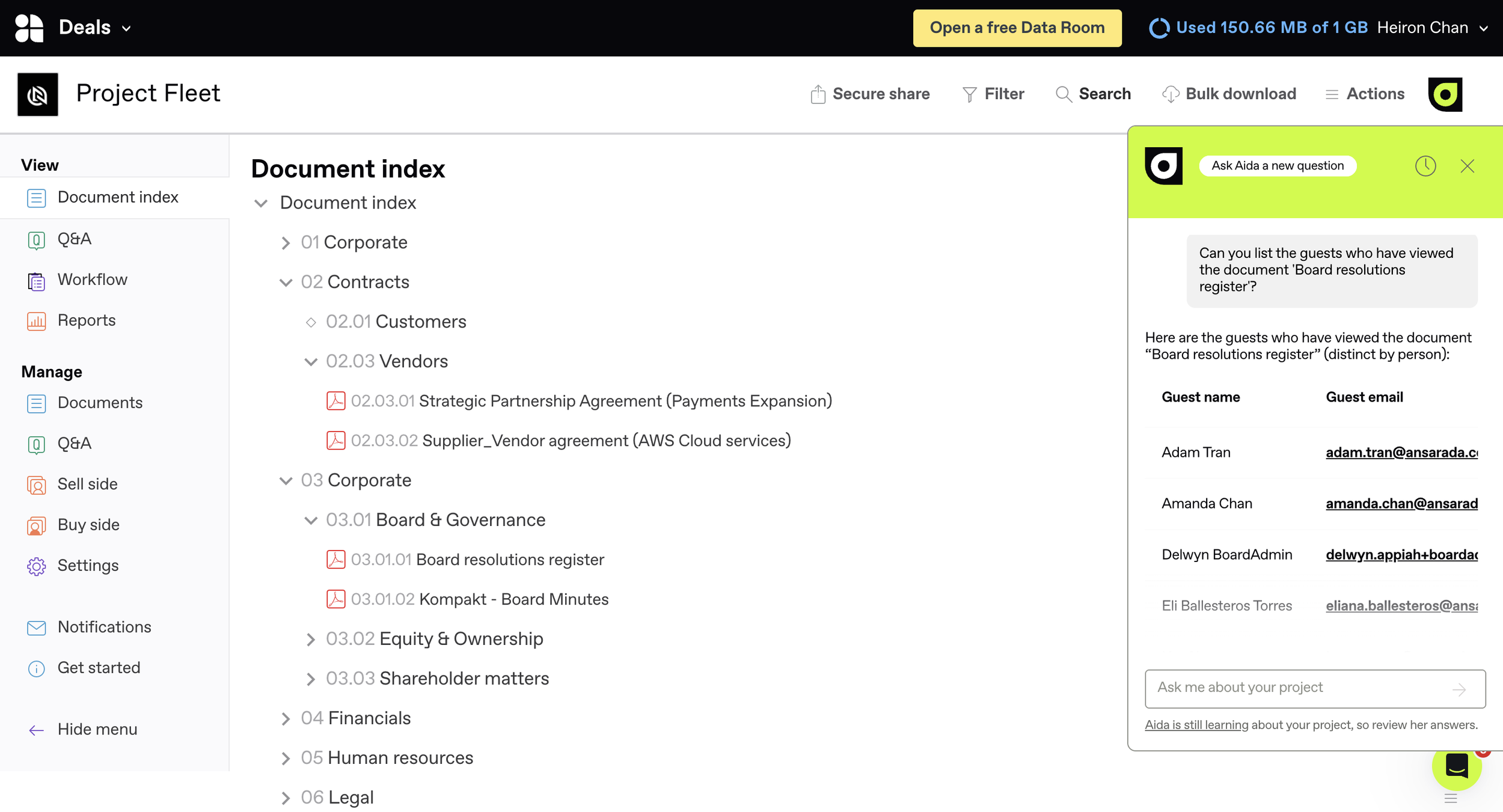

Aida can answer questions from all the main data sources in the data room: the Document Index, Q&A and People activity.

Over three releases, our team and I have significantly improved Aida, taking her from delivering simple document and Q&A insights in October to answering semantic questions about historical activity and information. In December, we also extended her capabilities to help procure customers.

In our upcoming release, our team is extending Ansarada’s proprietary machine learning algorithm to the project dash, enabling executives such as CEOs and CFOs to predict, at a glance, the likelihood of their deal succeeding.

My design process

Rapid discovery research

We only had six weeks to design and ship Aida for the first release, but there was no shared understanding of what AI could reliably produce with Ansarada’s data.

Before I could design anything, our team and I needed clarity: we had to agree on our vision and work backwards to find out what was possible.

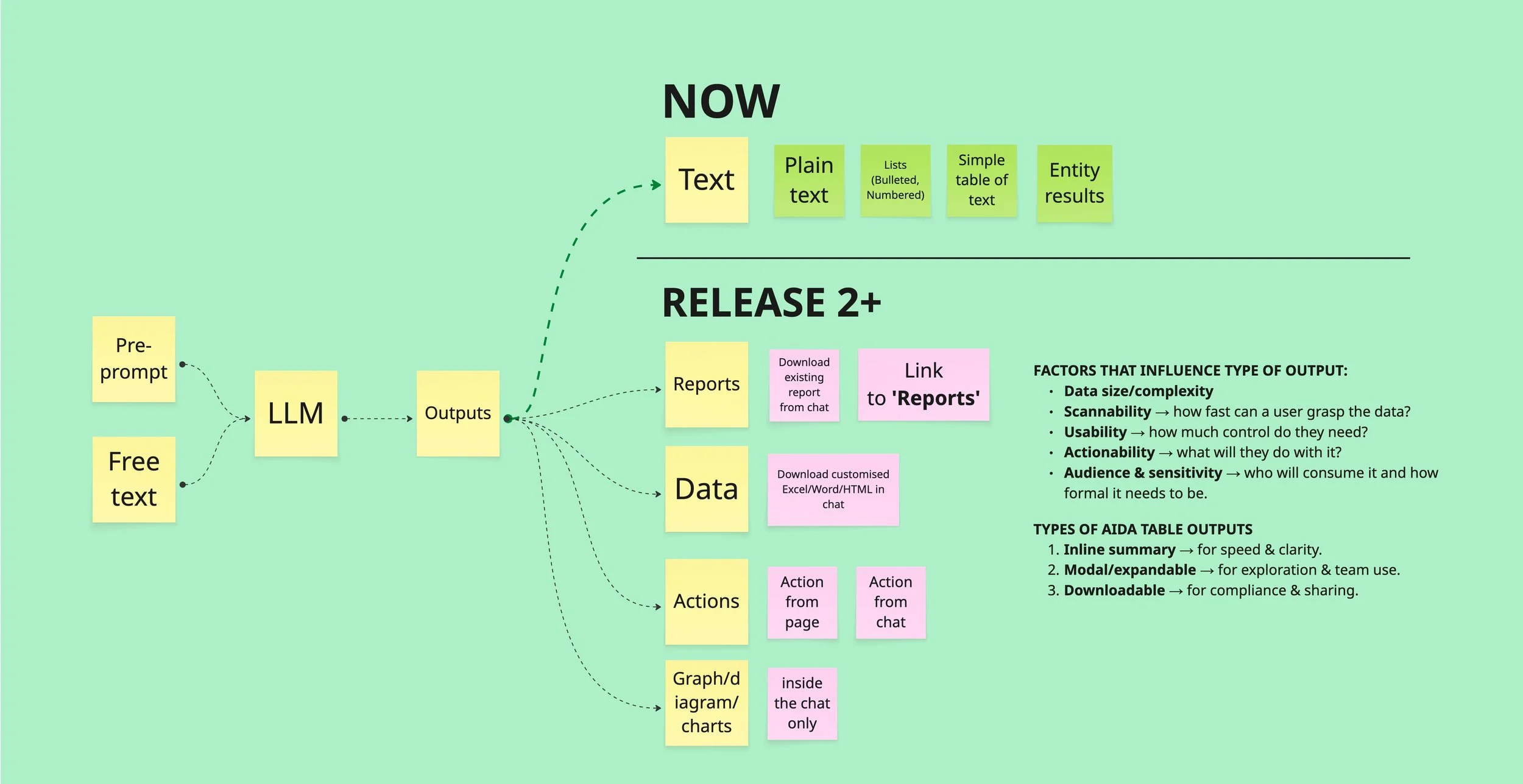

To address this gap, I researched and catalogued all of the different response types LLMs could generate, mapping those outputs to the data sources we could connect for Release 1.

I then turned my observations into a capability map which plotted how user prompts in Ansarada would translate into AI outputs, reports, data, and actions. This became a shared artefact that I used to align on scope and feasibility with product and engineering.

This work became the foundation for our Release 1 product requirements and engineering estimates. By removing uncertainty and locking scope early, the team could commit to a six-week delivery plan, and I could focus my design work on the most important interactions for the immediate release.

Designing in parallel with engineering

With the scope and AI capabilities defined, the next challenge was moving designs fast enough for engineering to start estimating and developing. I immediately set up daily design reviews with our Chief of Product and Design and other teammates to progress designs together.

These sessions were used to quickly pressure-test interactions, validate feasibility with engineering and lock down decisions so that estimation and development could begin as soon as possible.

This ensured that while Aida’s animation and brand were being developed by an external agency, the underlying experience, flows and AI behaviours were stable enough for engineers to implement.

Challenging engineers to scale and improve Aida

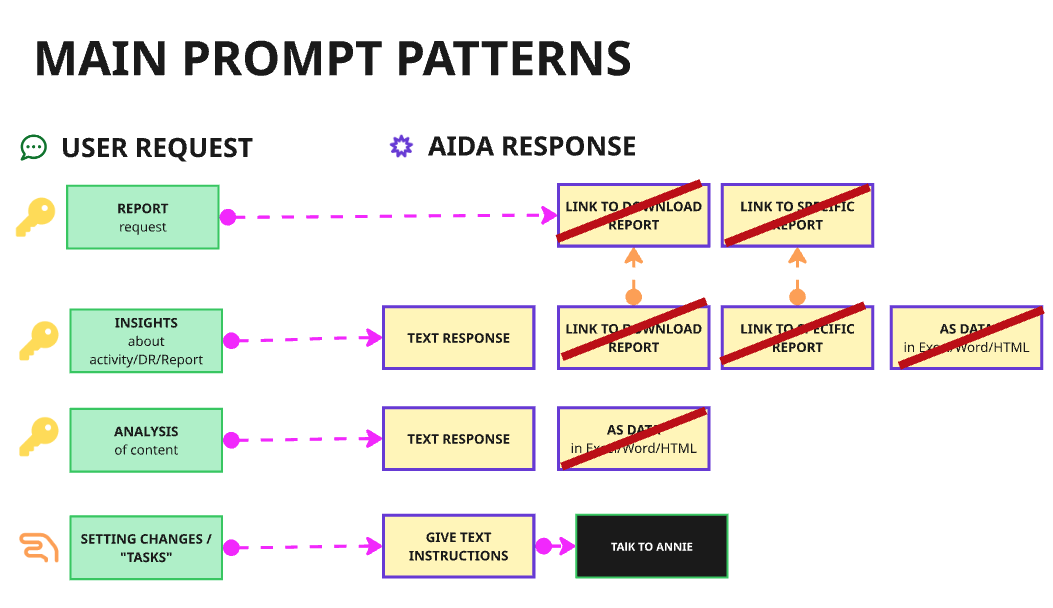

Our initial vision was to enable customers to freely query reports and data using Aida. However, engineers deemed this unfeasible for the first release, so we had to build and launch Aida with a controlled set of pre-written prompts.

Rather than accepting this as a permanent and unscalable compromise, I kept challenging the team to revisit the original vision during retros, planning and testing.

My persistence paid off during Release 2, when the engineers discovered they could, in fact, securely connect Aida to the desired data warehouses by implementing RAG (Retrieval-Augmented Generation), unlocking a significantly better and more scalable way to deliver insights to our customers.

Influencing this outcome required me to be closely involved in the AI QA and system prompt design process, acting as one of the quality gates for reliability, tone and trust.

I continue to work closely with the product manager, engineers and QA to test outputs, refine system prompts, and monitor how Aida responds in real scenarios.

How Aida works in Ansarada

Our goal with Aida was not just to add a standalone AI tool, but to make Aida a memorable experience.

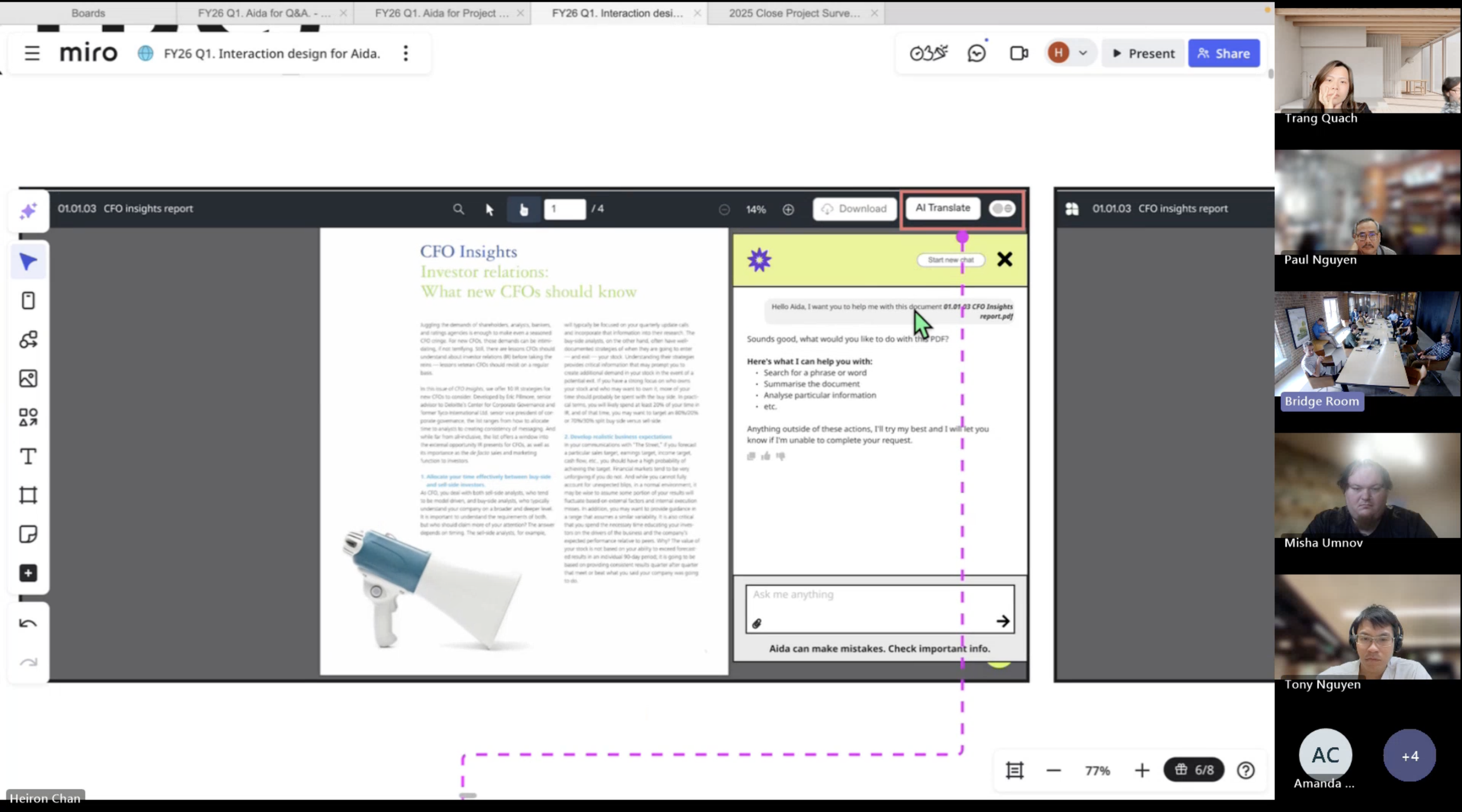

In the Document Index, Aida helps users get on-demand insights as they upload and review files in preparation for due diligence. She’s animated, speaks with wit and is anchored to the main navigation menu.

With guided prompts on top of general chat, users can quickly surface the information they need without leaving their workflow.

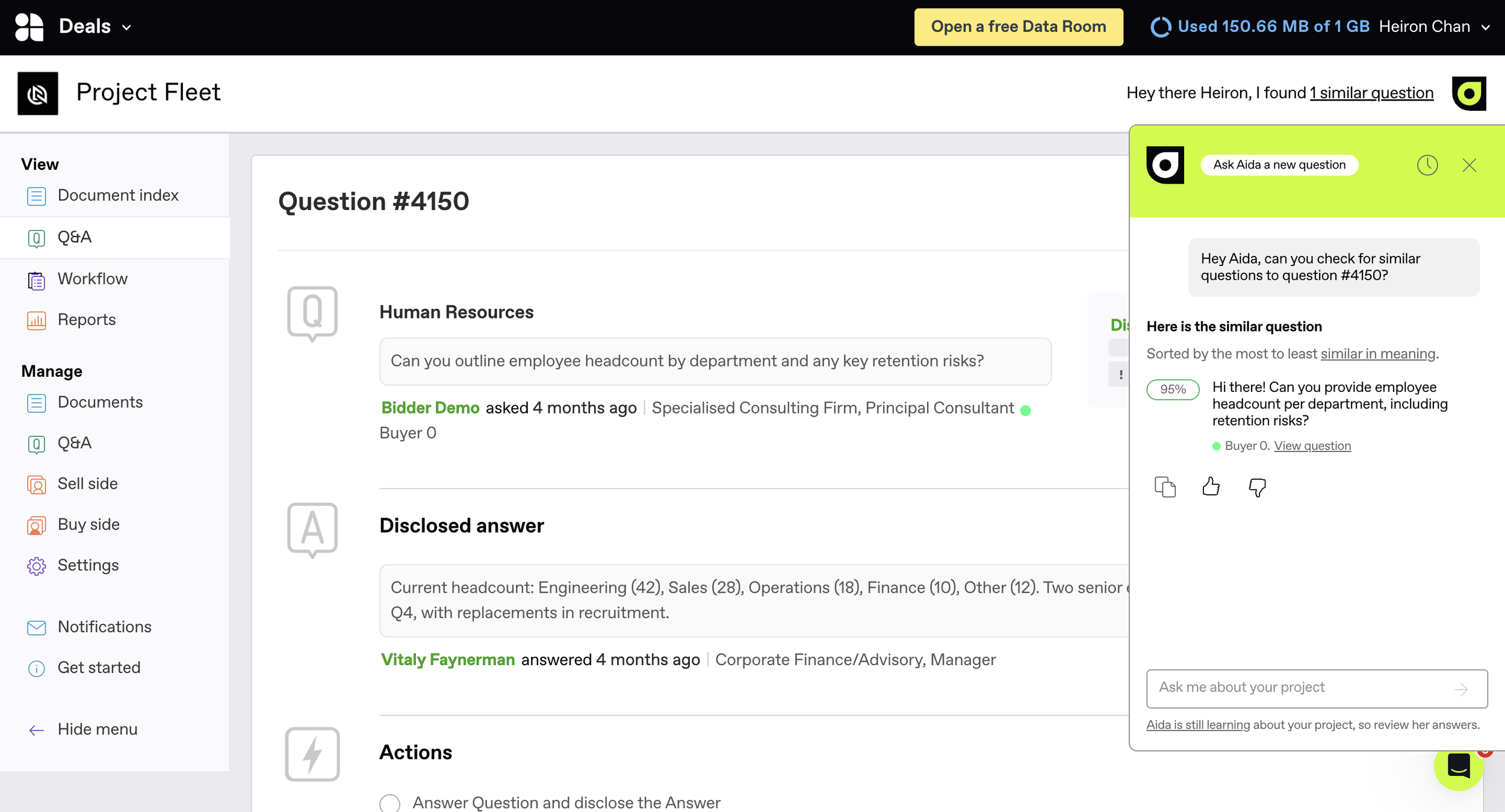

We also integrated Aida into Q&A, one of the most critical and time-consuming features of the data room where sellers and buyers communicate for due diligence.

Here, Aida helps identify similar questions and generate draft answers, reducing duplicate work for everyone involved and speeding up the back-and-forth between buyers and sellers during a deal.

In our upcoming January release, I’m extending Ansarada’s proprietary machine learning algorithm, trained on thousands of deals run in Ansarada, to the project dash.

Here, our team and I are helping senior executives such as CEOs and CFOs predict and track the likelihood of their deal succeeding. Although not focused on Aida, this update is a parallel AI project designed to drive engagement in the data room and, once customers are logged in, with Aida.